Neural Networks as Entropic Systems: Applications in Digital Pathology

Leyva, A.; Niazi, M. K. K.

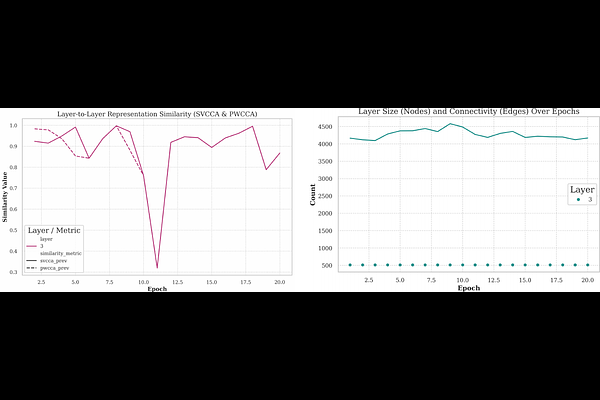

Abstract

Deep learning systems in digital pathology are widely regarded as opaque, limiting clinical trust and interpretability. We present a framework for empirically characterizing training-time learning dynamics in neural networks by directly measuring activation structure, weight evolution, and spectral organization during optimization. Using TCGA BRCA whole-slide images with replication-timing-derived methylation proxies as regression targets, we trained a Vision Transformer and tracked its intra-epoch behavior across 20 epochs. We observed reproducible structural signatures during training. Correlated groups of neurons formed stable activation modules whose modularity increased as training progressed, accompanied by a reduction in representation entropy of up to 60%. Weight trajectories exhibited bounded diffusion with progressively reduced variance, consistent with a damped stochastic process, and converged toward a stable stationary regime in later epochs. In image space, model attention systematically shifted from collagen-rich stromal regions in early epochs to basophilic, proliferative nuclear regions in later epochs, aligning with known histologic correlates of replicative stress. These findings demonstrate that neural networks develop predictable, quantifiable internal structure during training that can be directly visualized and measured. Framing learning dynamics in terms of entropy, modular organization, and stochastic stabilization provides a practical, mechanistic lens for interpreting how pathology AI models acquire biologically meaningful representations.
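The abstract does not specify how representation entropy was estimated. As an illustration only, the sketch below uses one common proxy: the Shannon entropy of the normalized eigenvalue spectrum of the activation covariance. The function name `spectral_entropy`, the synthetic "early" and "late" activation matrices, and the choice of estimator are all assumptions made for this example and are not taken from the paper.

```python
import numpy as np


def spectral_entropy(activations: np.ndarray, eps: float = 1e-12) -> float:
    """Shannon entropy (in nats) of the normalized covariance spectrum.

    activations: array of shape (n_samples, n_units).
    Hypothetical helper; the paper's actual estimator may differ.
    """
    centered = activations - activations.mean(axis=0, keepdims=True)
    # Squared singular values of the centered matrix are proportional
    # to the eigenvalues of the activation covariance matrix.
    singular_values = np.linalg.svd(centered, compute_uv=False)
    eigenvalues = singular_values ** 2
    total = eigenvalues.sum()
    if total <= eps:
        return 0.0
    p = eigenvalues / total
    p = p[p > eps]  # drop numerically zero modes before taking logs
    return float(-(p * np.log(p)).sum())


rng = np.random.default_rng(0)

# Synthetic stand-in for early training: 64 nearly independent units.
early = rng.normal(size=(512, 64))

# Synthetic stand-in for late training: the same 64 units driven by
# 4 shared latent factors plus a small amount of noise.
factors = rng.normal(size=(512, 4))
loadings = rng.normal(size=(4, 64))
late = factors @ loadings + 0.05 * rng.normal(size=(512, 64))

h_early = spectral_entropy(early)
h_late = spectral_entropy(late)
print(f"early: {h_early:.3f} nats, late: {h_late:.3f} nats")
print(f"relative reduction: {1 - h_late / h_early:.1%}")
```

Under this proxy, activations that concentrate variance in a few correlated directions yield lower entropy than activations spread evenly across units, which is the qualitative pattern the abstract reports. In practice the activation matrices would come from a chosen layer of the trained model at successive checkpoints rather than from synthetic data.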