Motion-Dependent Object Perception Reveals Limits of Current Video Neural Networks

Dunnhofer, M.; Uwisengeyimana, J. D. D.; Kar, K.

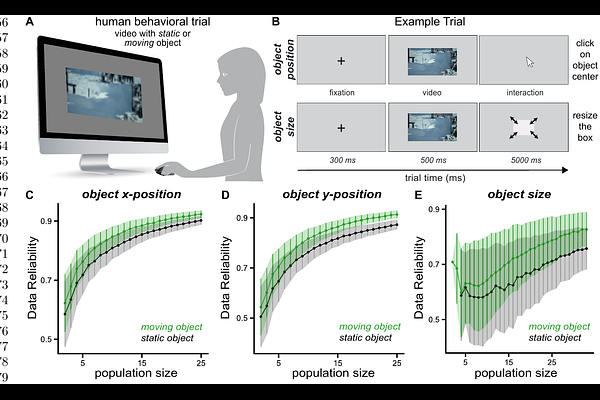

Abstract

How does motion contribute to robust object perception when appearance cues are unreliable? In natural scenes, camouflage, clutter, and occlusion can obscure object boundaries in static images, yet humans often resolve these ambiguities once objects move. Here we ask whether modern artificial vision systems capture this motion-dependent computation and whether their internal representations align with those used by biological vision. Using videos from the MOCA (Moving Camouflaged Animals) dataset, we introduce behavioral benchmarks that quantify the accuracy of object position and size estimation in scenes containing either static or moving objects. We first compare human observers with a diverse set of artificial neural networks. For static stimuli, image-based and video-based models achieve similar accuracy in predicting object position and size. However, humans show systematic improvements when objects are presented in motion. Image-based models do not exhibit this motion-dependent improvement, whereas several video-based architectures reproduce this behavioral pattern by integrating information across time. To examine the representational basis of these differences, we record neural population responses from the macaque inferior temporal (IT) cortex during presentation of the same stimuli. Models that more closely match IT representations also better reproduce human motion-dependent behavior. These results show that static accuracy alone is insufficient to evaluate models of visual perception and that alignment with primate visual representations provides a useful guide for developing models that capture dynamic computations in vision.