LAMBDA: A Prophage Detection Benchmark for Genomic Language Models

Lindsey, L. M.; Pershing, N. L.; Dufault-Thompson, K.; Gwak, H.-j.; Habib, A.; Schindler, A.; Rakheja, A.; Round, J.; Stephens, W. Z.; Blaschke, A. J.; Sundar, H.; Jiang, X.

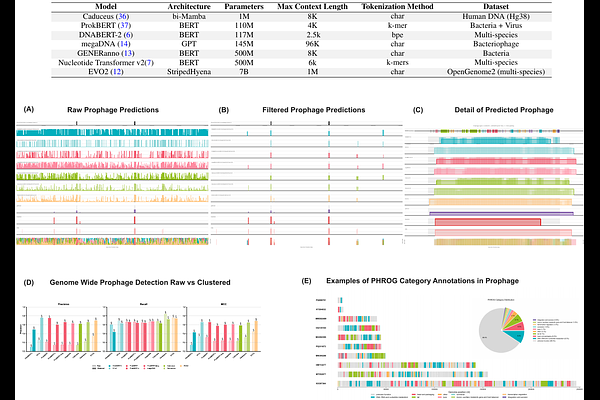

Abstract

Transformer-based genomic sequence models represent an emerging frontier in computational biology. However, their embeddings have not yet matched the predictive power of natural language and protein language models, indicating a gap between current implementations and their theoretical promise. Existing benchmarks for DNA language models focus primarily on classifying regulatory elements in eukaryotic genomes, leaving open the fundamental question of whether these models learn sequence-level features across whole genomes. We introduce LAMBDA, a benchmark designed to rigorously evaluate genomic language model embeddings through phage-bacteria sequence discrimination across four categories of increasing complexity: probing tasks, fine-tuning assessments, diagnostic tests, and genome-wide prophage detection. Our comprehensive analysis of current genomic language models provides insights into the importance of training data quality relative to model size, the need for domain-specific training, and the application of genomic language models to detecting prophage sequences. This benchmark represents a challenging genomic annotation task in the bacterial domain and addresses a key computational problem with direct relevance to microbiology and medicine.