Improving genomic language model reliability under distribution shift

Hearne, G.; Refahi, M. S.; Polikar, R.; Rosen, G. L.

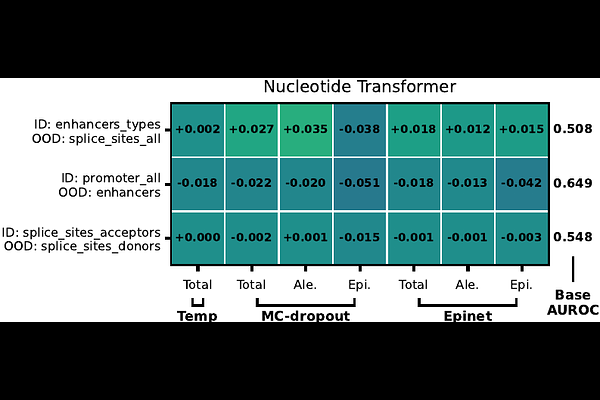

Abstract

Transformer-based Genomic Language Models (GLMs) have achieved strong performance across diverse genomic prediction tasks. However, their tendency toward overconfident predictions, particularly on noisy or unfamiliar data, limits their reliability. In genomics, where unknown species and novel variants are common, models that remain robust under distribution shift are crucial for dependable predictions. Here, we analyze the impact of several common and novel uncertainty quantification (UQ) methods in the context of GLMs, evaluating their performance across diverse downstream genomic and metagenomic prediction tasks. Comparing model behavior on both in-distribution (ID) and out-of-distribution (OOD) data, we show that temperature scaling and epistemic neural networks can improve classification reliability across multiple GLM architectures and domains. The software is available at: https://github.com/EESI/glm-epinet-pyt
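To make the idea of temperature scaling concrete, the following is a minimal sketch rather than the authors' implementation. It assumes a classifier that outputs logits, and it fits a single scalar temperature by minimizing negative log-likelihood on held-out validation data. The function names (`softmax`, `nll`, `fit_temperature`), the grid search, and the synthetic logits are all illustrative assumptions and are not taken from the paper or its repository.

```python
import numpy as np


def softmax(logits, temperature=1.0):
    """Row-wise softmax of logits divided by a scalar temperature."""
    z = logits / temperature
    z = z - z.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def nll(logits, labels, temperature=1.0):
    """Mean negative log-likelihood of the true labels."""
    probs = softmax(logits, temperature)
    true_class_probs = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(true_class_probs + 1e-12)))


def fit_temperature(val_logits, val_labels, grid=None):
    """Pick the temperature on a grid that minimizes validation NLL.

    A grid search is used here for simplicity (an assumption of this sketch);
    gradient-based optimizers are a common alternative.
    """
    if grid is None:
        grid = np.linspace(0.05, 10.0, 400)
    losses = [nll(val_logits, val_labels, t) for t in grid]
    return float(grid[int(np.argmin(losses))])


# Synthetic stand-in for validation logits from a classifier (hypothetical data).
rng = np.random.default_rng(0)
n_samples, n_classes = 2000, 4
labels = rng.integers(0, n_classes, size=n_samples)
base = rng.normal(size=(n_samples, n_classes))
base[np.arange(n_samples), labels] += 1.5  # the true class scores higher on average
overconfident_logits = 5.0 * base          # inflate logits to mimic overconfidence

T = fit_temperature(overconfident_logits, labels)
nll_before = nll(overconfident_logits, labels, 1.0)
nll_after = nll(overconfident_logits, labels, T)
print(f"fitted temperature: {T:.2f}")
print(f"validation NLL before: {nll_before:.3f}, after: {nll_after:.3f}")
```

Because dividing logits by a positive scalar does not change their ordering, the predicted class for each sample is unchanged; only the confidence attached to it is rescaled. A fitted temperature above 1 softens the predicted probabilities, which is the behavior one would expect for an overconfident model.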