Accelerating Quantum Tensor Network Simulations with Unified Path Variations and Non-Degenerate Batched Sampling

Taylor Lee Patti, Paavai Pari, Yang Gao, Azzam Haidar, Thien Nguyen, Tom Lubowe, Daniel Lowell, Brucek Khailany

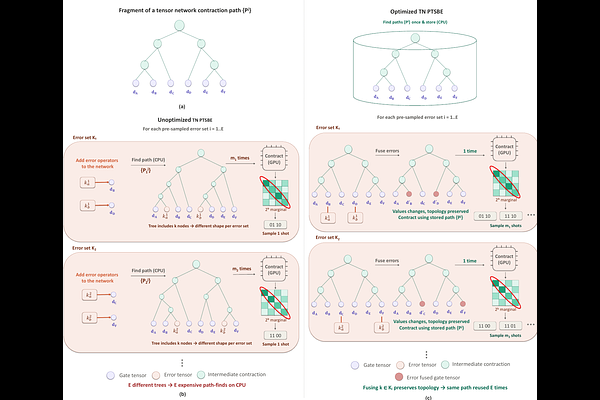

Abstract

Quantum trajectory methods reduce the computational overhead of simulating noisy quantum systems, approximating them with $m$ stochastically sampled $2^n$-entry quantum statevectors rather than exact $2^{2n}$-entry density matrices. Recently, Pre-Trajectory Sampling with Batched Execution (PTSBE) has dramatically increased the data collection rate of these methods. While statevector PTSBE has demonstrated data collection speedups of over $10^6 \times$, tensor network implementations achieved only $\sim 15 \times$ speedup. This comparatively modest tensor network advantage stemmed from 1) contraction path recalculations, 2) sequential tensor network sampling, and 3) inflexible/unoptimized contraction hyperparameters. In this manuscript, we increase PTSBE's tensor network data collection rate to more than $10^8\times$ that of traditional trajectory methods by developing 1) error-independent unified path variation, 2) non-degenerate tensor network sampling, and 3) a flexible/optimized contraction framework. While our methods are particularly powerful for accelerating non-proportional sampling, we also demonstrate a more than $1000\times$ speedup for more general quantum simulations.
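
To make the entry counts above concrete, the following minimal sketch compares the $2^{2n}$ entries of a density matrix with the $m \cdot 2^n$ entries touched by $m$ trajectory statevectors. The function names are hypothetical and chosen for illustration only. The sketch counts entries, not bytes or runtime, and it sums over all trajectories even though they need not be held in memory at the same time.

```python
def statevector_entries(n_qubits: int) -> int:
    """Number of amplitudes in one n-qubit statevector: 2^n."""
    return 2 ** n_qubits


def density_matrix_entries(n_qubits: int) -> int:
    """Number of entries in an n-qubit density matrix: 2^(2n)."""
    return 2 ** (2 * n_qubits)


def trajectory_entries(n_qubits: int, m_trajectories: int) -> int:
    """Total entries across m sampled statevectors: m * 2^n."""
    return m_trajectories * statevector_entries(n_qubits)


def entry_ratio(n_qubits: int, m_trajectories: int) -> float:
    """Density-matrix entries divided by total trajectory entries (= 2^n / m)."""
    return density_matrix_entries(n_qubits) / trajectory_entries(
        n_qubits, m_trajectories
    )


if __name__ == "__main__":
    # Illustrative values only; they are not taken from the manuscript.
    n, m = 20, 1024
    print(f"density matrix entries: {density_matrix_entries(n)}")
    print(f"trajectory entries:     {trajectory_entries(n, m)}")
    print(f"ratio (2^n / m):        {entry_ratio(n, m)}")
```

The ratio simplifies to $2^n / m$, so in this simple count the trajectory approach touches fewer entries whenever the number of trajectories $m$ is smaller than $2^n$.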